These days, I see more and more companies building applications as microservices. They leverage Kubernetes to manage these microservices because of its scalability and flexibility.

But as the number of microservices increases and application pods get distributed across multiple clusters and cloud providers, managing and scaling them becomes hard; ensuring proper networking rules, security, and observability among services becomes complex for DevOps and architects.

Service mesh was introduced to address these challenges and complexity while scaling microservices in K8s. It provides a platform for network traffic management and handles service-to-service communication at an infrastructure level.

Istio is one of the most popular service mesh platforms. Let us explore Istio in this article.

Challenges of microservices in cloud and Kubernetes environments

DevOps, architects, and SREs face the following challenges regarding microservices in K8s in the cloud.

Developers toil to create security policies for their applications

Organizations and government bodies set security policies and standards for application development, such as HIPAA and rules for handling personally identifiable information (PII). These help to ensure the security of data and applications in a dynamic threat landscape. A web application firewall (WAF) is not enough to secure data in transit. Developers have to write authentication and authorization logic into their services to prevent data breaches during communication with other services.

This is a particular headache for developers because the effort is repeated in every service. And whenever there is a new organizational policy for compliance, developers have to toil to make the security changes in their systems.

No central point to secure multicloud and multicluster applications

Factors such as the diversity of technologies and container versions keep security local to each application. It is a pain to enforce consistent security policies for applications across multiple clusters and cloud providers, and with various third-party services and APIs. While developers write the security policies for their own applications or services, it remains unclear who is responsible for securing traffic across multiple infrastructures.

Traffic management of microservices is a pain for cloud teams

DevOps engineers, cloud engineers, or infra engineers configure the communication logic of an application in the service manifest itself. Every time new services are deployed or existing services change, the networking logic has to be changed. For example, if a new service has been deployed, its endpoint has to be manually added to all the other services that communicate with it. Managing traffic this way is a time-consuming process.

Struggle to monitor application performance (SREs)

It is essential to have real-time visibility into the health and performance of network infrastructure. It helps SREs to identify and respond to any issues or security threats emerging in the real-time traffic, and ensure the availability of services at all times.

Most microservices are distributed across clouds or Kubernetes clusters. Without a single plane of visibility into traffic (both north-south and east-west) and into granular performance and behavioral details, it is challenging for SREs to troubleshoot and resolve issues quickly. This often leads to SLA breaches and service unavailability.

Istio solves all the above problems by abstracting the network and security logic from the application layer to its own infrastructure layer. This is done by injecting a sidecar (Envoy proxy) on pods, which in turn helps in managing complex, distributed networks at scale.

Let us now discuss Istio and its components and how it can help in simplifying network challenges for DevOps and architects.

What is Istio?

Istio is an open-source service mesh platform that simplifies and secures traffic between microservices. Istio provides a dedicated infrastructure for traffic management, security, and observability, to help developers handle the network of microservices in Kubernetes and multiple clouds, at scale.

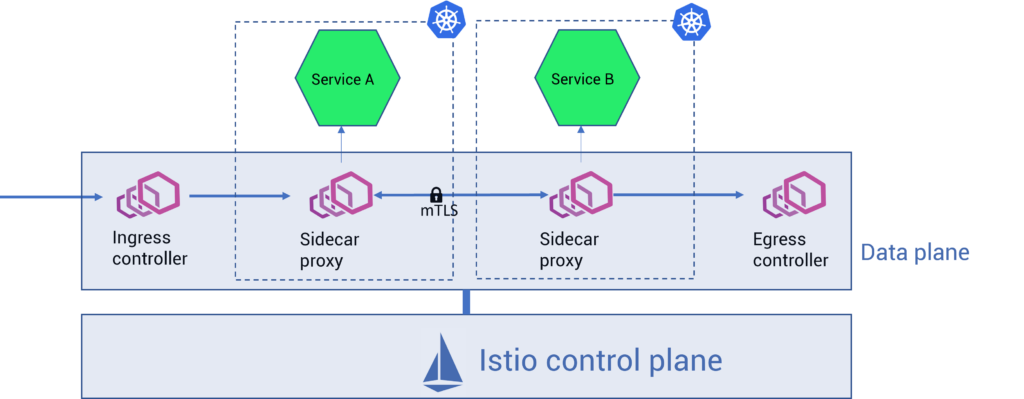

Istio works by deploying an Envoy proxy (an L4 and L7 proxy) alongside each microservice. The proxy intercepts and handles service-to-service traffic, and thus abstracts communication logic from the service/application layer into a dedicated infrastructure layer (refer to fig. A).

Fig. A – Service-to-service communication before and after deploying Istio service mesh in a Kubernetes cluster

Istio components and sidecar architecture

Istio has two main components that control and manage the entire network infrastructure layer (refer to fig. B):

- Data plane: It is a network of Envoy proxies that handle the communication between services in the mesh. Envoy is a lightweight proxy deployed as a sidecar alongside each service in a mesh, which then intercepts the traffic between that particular service and other services.

The Envoy proxy data plane routes and controls the request flow between services, apart from providing service discovery, security (mTLS), network resiliency (retries, timeouts, circuit breakers, fault injection), and observability (logs, traces, and metrics) features.

- Control plane: The control plane interacts with the data plane and provides a centralized management and configuration layer for data plane proxies. That is, the control plane converts high-level routing rules that define traffic control behavior into Envoy-specific configurations.

Earlier control plane components were divided into Pilot, Galley, Citadel, and Mixer. Now, control plane functionalities are consolidated into a single binary called istiod. Istiod handles service discovery, configuration, and certificate management for service communication in the mesh.

Fig. B – Istio sidecar architecture

Although injecting Envoy proxies as sidecars is the go-to method to implement Istio, there are certain limitations to this approach. The primary limitation is that the operational cost of deploying and maintaining a sidecar is fixed, regardless of the complexity of the use case.

That is, whether the use case is simple transport security or complex L7 policies, both require deploying and maintaining sidecars.

There are also other implementation and operational challenges with the sidecar model. To solve those challenges, Istio introduced ambient mesh — a sidecar-less implementation of Istio.

Istio ambient mesh

Istio ambient mesh is a modified and sidecar-less data plane for Istio. Ambient mesh takes a layered approach and splits the functionality of Istio into two: the secure overlay layer and the L7 processing layer.

- Secure overlay layer suits enterprises with comparatively minimal use cases such as routing, zero trust with mTLS, and L4 processing.

- The L7 processing layer helps organizations take advantage of the secure overlay, along with accessing advanced L7 processing and the full range of Istio capabilities.

This layered approach is useful for enterprises to adopt Istio incrementally.

(More on Istio ambient mesh and the difference between sidecar and sidecar-less Istio: Istio Ambient Mesh vs Istio Sidecar.)

Features of Istio service mesh

Istio provides several features for a variety of IT teams in an organization. Traffic management, security, observability, and extensibility are the major ones.

Traffic management

Istio automatically detects the endpoints of the services in the mesh and stores them in its internal service registry. This registry lets Istio manage traffic by load balancing between replica pods.

Istio supports applying fine-grained control over traffic splitting between services, like routing a percentage of traffic to a specific service, i.e., canary deployment with Istio.
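To make the canary idea concrete, here is a minimal sketch in Python that builds the two Istio resources usually involved in a weighted split: a VirtualService carrying the weights and a DestinationRule naming the subsets. The service name `reviews`, the subset names, and the `version` labels are assumptions made for illustration. The sketch insists on weights that add up to 100 only to keep the percentages readable.

```python
import json


def canary_virtual_service(host, stable_weight, canary_weight):
    """Build a VirtualService that splits HTTP traffic between two subsets."""
    if stable_weight + canary_weight != 100:
        raise ValueError("weights must add up to 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": f"{host}-canary"},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": [
                    {"destination": {"host": host, "subset": "stable"},
                     "weight": stable_weight},
                    {"destination": {"host": host, "subset": "canary"},
                     "weight": canary_weight},
                ],
            }],
        },
    }


def canary_destination_rule(host):
    """Build a DestinationRule that maps subset names to pod labels.

    The label values v1/v2 are hypothetical; use whatever labels your
    deployments actually carry.
    """
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "DestinationRule",
        "metadata": {"name": f"{host}-subsets"},
        "spec": {
            "host": host,
            "subsets": [
                {"name": "stable", "labels": {"version": "v1"}},
                {"name": "canary", "labels": {"version": "v2"}},
            ],
        },
    }


if __name__ == "__main__":
    # Send 10% of traffic to the canary; kubectl accepts JSON manifests too.
    print(json.dumps(canary_virtual_service("reviews", 90, 10), indent=2))
    print(json.dumps(canary_destination_rule("reviews"), indent=2))
```

Shifting more traffic to the canary is then a matter of regenerating the VirtualService with new weights and applying it again.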

Besides, Istio provides the following network resilience and testing methods to ensure the reliability of the applications:

- Timeouts: A timeout is the amount of time an Envoy proxy waits for a reply from a service before treating the call as failed. Timeouts ensure that services do not hang because the Envoy proxy is waiting for replies forever.

- Retries: A retry setting is the maximum number of times Envoy tries to connect to a service again when the initial attempt fails.

- Circuit breakers: A circuit breaker sets limits for calls to individual hosts within a service, such as the number of concurrent connections or failed calls. Once a limit has been reached, further connections to that host are prevented.

- Fault injection: It is a testing method for systems where errors are deliberately introduced to verify their ability to recover from error conditions.
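The four methods above map onto a handful of fields in Istio's VirtualService and DestinationRule resources. The following Python sketch builds one manifest per concern. The host name and every numeric value (2s timeout, 3 attempts, 100 connections, and so on) are placeholders chosen for illustration, not recommendations. The fault is kept in its own VirtualService because, as far as I know, Istio does not apply timeouts or retries on a route that has fault injection enabled.

```python
import json


def timeout_and_retries(host):
    """VirtualService with a request timeout and a retry policy."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": f"{host}-resilience"},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": [{"destination": {"host": host}}],
                "timeout": "2s",  # overall deadline for the call
                "retries": {
                    "attempts": 3,  # maximum number of retries
                    "perTryTimeout": "500ms",
                    "retryOn": "5xx,connect-failure",
                },
            }],
        },
    }


def circuit_breaker(host):
    """DestinationRule that caps connections and ejects failing hosts."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "DestinationRule",
        "metadata": {"name": f"{host}-circuit-breaker"},
        "spec": {
            "host": host,
            "trafficPolicy": {
                "connectionPool": {
                    "tcp": {"maxConnections": 100},
                    "http": {"http1MaxPendingRequests": 10},
                },
                "outlierDetection": {
                    "consecutive5xxErrors": 5,
                    "interval": "30s",
                    "baseEjectionTime": "60s",
                    "maxEjectionPercent": 50,
                },
            },
        },
    }


def delay_fault(host, percent, delay):
    """VirtualService that delays a percentage of requests for testing."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": f"{host}-fault"},
        "spec": {
            "hosts": [host],
            "http": [{
                "fault": {"delay": {"percentage": {"value": percent},
                                    "fixedDelay": delay}},
                "route": [{"destination": {"host": host}}],
            }],
        },
    }


if __name__ == "__main__":
    for manifest in (timeout_and_retries("ratings"),
                     circuit_breaker("ratings"),
                     delay_fault("ratings", 10.0, "5s")):
        print(json.dumps(manifest, indent=2))
```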

Explore the network management and resiliency features of Istio in detail: Traffic Management and Network Resiliency With Istio Service Mesh.

Security

Istio helps to secure the network of microservices by facilitating granular authentication, authorization, and access control policies.

- Authentication: Istio verifies the identity of users (humans or machines) by allowing peer-to-peer and request authentication policies.

  - Peer authentication involves mTLS implementation, where communication between services is encrypted and authenticated using certificates issued for both the client and the server.

  - Request authentication involves server-side verification, where the client has to attach a JWT (JSON Web Token) to the request.

- Authorization: Verifying whether the authenticated user is allowed to access a server and perform a specific action is done using authorization policies in Istio. Authorization policies can be set to allow, deny, or perform custom actions against an incoming request based on different parameters.

- Access control: Istio controls the access of authorized users to resources by implementing the least privilege policy and role-based access control (RBAC). Istio supports RBAC policies to be set on method, service, and namespace levels.

Istio helps in further securing the network by automating key and certificate rotation at scale.
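The authentication and authorization policies described above are expressed as three Istio resources: PeerAuthentication, RequestAuthentication, and AuthorizationPolicy. Here is a minimal Python sketch that builds one of each. The namespace, app label, issuer URL, JWKS URL, and service account are all hypothetical values; substitute your own.

```python
import json

SECURITY_API = "security.istio.io/v1beta1"


def strict_mtls(namespace):
    """PeerAuthentication that requires mTLS for every workload in a namespace."""
    return {
        "apiVersion": SECURITY_API,
        "kind": "PeerAuthentication",
        "metadata": {"name": "default", "namespace": namespace},
        "spec": {"mtls": {"mode": "STRICT"}},
    }


def jwt_request_authentication(namespace, app, issuer, jwks_uri):
    """RequestAuthentication that validates JWTs from the given issuer."""
    return {
        "apiVersion": SECURITY_API,
        "kind": "RequestAuthentication",
        "metadata": {"name": f"{app}-jwt", "namespace": namespace},
        "spec": {
            "selector": {"matchLabels": {"app": app}},
            "jwtRules": [{"issuer": issuer, "jwksUri": jwks_uri}],
        },
    }


def allow_get_from(namespace, app, principal, paths):
    """AuthorizationPolicy that allows GET on some paths from one caller.

    `principal` is the caller's mTLS identity, for example
    "cluster.local/ns/frontend/sa/web" (a made-up service account).
    """
    return {
        "apiVersion": SECURITY_API,
        "kind": "AuthorizationPolicy",
        "metadata": {"name": f"{app}-allow-get", "namespace": namespace},
        "spec": {
            "selector": {"matchLabels": {"app": app}},
            "action": "ALLOW",
            "rules": [{
                "from": [{"source": {"principals": [principal]}}],
                "to": [{"operation": {"methods": ["GET"], "paths": paths}}],
            }],
        },
    }


if __name__ == "__main__":
    manifests = [
        strict_mtls("payments"),
        jwt_request_authentication(
            "payments", "api",
            "https://issuer.example.com",
            "https://issuer.example.com/.well-known/jwks.json"),
        allow_get_from("payments", "api",
                       "cluster.local/ns/frontend/sa/web", ["/api/*"]),
    ]
    for manifest in manifests:
        print(json.dumps(manifest, indent=2))
```

Because these policies live in the mesh rather than in each service, a compliance change becomes an edit to a manifest instead of a code change in every application.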

Observability

Istio offers observability and real-time visibility into the performance and behavior of applications. It is done by providing detailed telemetry for traffic flow between services in the mesh.

Istio generates the following types of telemetry:

- Metrics: Istio generates service metrics for real-time performance monitoring of services. They are based on the four “golden signals” of monitoring, which are latency, traffic, errors, and saturation.

- Distributed traces: Istio collects traces of activity across multiple services in a mesh to better understand service dependency and traffic flow.

- Access logs: Istio can produce a complete record of communication between services in the mesh, making it easier to understand the behavior of each workload.

Apart from providing add-ons for tools such as Prometheus, Grafana, Kiali, and Jaeger, Istio can be integrated with Apache SkyWalking for unified observability.
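As a rough illustration of how the metrics can be consumed, the Python sketch below builds Prometheus queries for three of the golden signals (traffic, errors, latency) from Istio's standard metrics `istio_requests_total` and `istio_request_duration_milliseconds`. The service name, the 5-minute window, and the choice of the 99th percentile are assumptions; the sketch only produces query strings and does not contact a Prometheus server.

```python
def _selector(service, extra=""):
    """Label selector for metrics reported by the destination-side proxy."""
    labels = f'reporter="destination",destination_service="{service}"'
    return labels + ("," + extra if extra else "")


def request_rate(service, window="5m"):
    """Traffic: requests per second received by the service."""
    return f"sum(rate(istio_requests_total{{{_selector(service)}}}[{window}]))"


def error_ratio(service, window="5m"):
    """Errors: share of requests that ended with a 5xx response code."""
    failed = _selector(service, 'response_code=~"5.."')
    errors = f"sum(rate(istio_requests_total{{{failed}}}[{window}]))"
    return f"{errors} / {request_rate(service, window)}"


def latency_quantile(service, quantile=0.99, window="5m"):
    """Latency: a quantile of request duration in milliseconds."""
    buckets = f"istio_request_duration_milliseconds_bucket{{{_selector(service)}}}"
    return f"histogram_quantile({quantile}, sum(rate({buckets}[{window}])) by (le))"


if __name__ == "__main__":
    svc = "reviews.default.svc.cluster.local"  # hypothetical service
    for query in (request_rate(svc), error_ratio(svc), latency_quantile(svc)):
        print(query)
```

The resulting strings can be pasted into the Prometheus expression browser or used as Grafana panel queries.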

Extensibility

Istio provides the ability to extend proxy functionality using WebAssembly (Wasm), a sandboxing technology that can be used to extend the Istio proxy (Envoy). Wasm replaced Mixer, which was previously the primary extension mechanism in Istio.

With Wasm, users can build support for new protocols, custom metrics, loggers, and other filters. And these Wasm modules can be distributed dynamically at runtime.
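One way to distribute a Wasm module is through Istio's WasmPlugin resource, which tells the selected proxies where to fetch the module from. The Python sketch below builds such a manifest. The plugin name, the OCI image URL, the app label, and the plugin configuration are all invented for illustration, and the `STATS` phase is just one of the insertion points Istio offers.

```python
import json


def wasm_plugin(name, namespace, app, module_url, plugin_config):
    """Build a WasmPlugin manifest that attaches a Wasm module to matching pods."""
    return {
        "apiVersion": "extensions.istio.io/v1alpha1",
        "kind": "WasmPlugin",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Only proxies of pods carrying this label load the module.
            "selector": {"matchLabels": {"app": app}},
            # Where the proxy fetches the module from (hypothetical registry).
            "url": module_url,
            # Position in the Envoy filter chain relative to Istio's own filters.
            "phase": "STATS",
            # Free-form configuration handed to the module.
            "pluginConfig": plugin_config,
        },
    }


if __name__ == "__main__":
    manifest = wasm_plugin(
        name="custom-metrics",
        namespace="default",
        app="reviews",
        module_url="oci://registry.example.com/wasm/custom-metrics:0.1.0",
        plugin_config={"metric_prefix": "reviews_"},
    )
    print(json.dumps(manifest, indent=2))
```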

The following are some of the open-source projects that have emerged on top of Istio using Wasm:

- Slime – an intelligent service manager to use Istio and Envoy

- MOSN – provides cloud-native edge gateways and agents

- Aeraki – provides support for L7 protocols other than HTTP and gRPC

Benefits of Istio service mesh

Istio is considered to be resource-intensive and it has a bit of a learning curve. But the benefits Istio provides outweigh them all. Below are some major benefits of implementing Istio:

- 5X increased developer experience: Using Istio, developers are free to work on business logic rather than writing complex network rules. This ensures better productivity, fosters innovation and improves developer experience.

- 100% zero trust network security: Istio allows DevOps engineers and security managers to set granular security policies for enforcing strict authentication and authorization for communication between services. Coupled with mTLS-based traffic encryption, these significantly improve the security posture of the infrastructure. The centralized way of enforcing policies provided by Istio makes compliance easier for security teams, and the policies work across cluster boundaries. This allows them to seamlessly implement a zero trust network (ZTN) for microservices.

- Zero-hassle progressive delivery: With its ability to perform flexible and fine-grained traffic splitting between services, Istio helps DevOps engineers to perform progressive delivery, such as canary and blue-green releases, without any hassle.

- 10X faster audit: Access logs provided by Istio help auditors analyze the performance of the network over a period of time. The telemetry data helps them quickly identify performance bottlenecks and provide suggestions for improvements.

- 4X faster MTTR from network failures: Istio helps SREs and Ops teams to have observability and real-time visibility of microservices networks in the cloud. The telemetry data provided by Istio is useful for them to diagnose and troubleshoot any errors, and restore services as early as possible. The data provides SREs and Ops with end-to-end visibility into the request flow and dependencies between services, enabling them to have multicluster and multicloud visibility to analyze the performance and behavior of applications.

- 99.99% resilient infrastructure: With advanced load balancing and traffic management capabilities, cloud architects can ensure a highly available and high-performance network infrastructure for production.

How to get started with Istio

Watch the video below to get started with Istio in a matter of a few minutes. The video covers a demo, showing the following items:

- Installing Istio

- Preparing namespace for Istio onboarding (enabling automated Envoy proxy injection)

- Deploying a demo application

- Accessing the demo application from outside the cluster by deploying the Istio ingress gateway

- Visualizing the traffic flow between services using the Kiali dashboard
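For the namespace preparation step, automated sidecar injection is normally switched on with the `istio-injection=enabled` label on the namespace. Here is a small Python sketch of the corresponding Namespace manifest; the namespace name `demo` is a placeholder. Running `kubectl label namespace demo istio-injection=enabled` against an existing namespace has the same effect.

```python
import json


def injection_enabled_namespace(name):
    """Namespace manifest that opts its pods into automatic sidecar injection."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            # New pods in a namespace with this label get an Envoy sidecar.
            "labels": {"istio-injection": "enabled"},
        },
    }


if __name__ == "__main__":
    print(json.dumps(injection_enabled_namespace("demo"), indent=2))
```

Pods that were already running before the label was added keep running without a sidecar until they are restarted.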

If you would like to be thorough with Istio fundamentals with hands-on demos, check out our Istio certification course on Udemy.

And if you would like to implement Istio enterprise-wide but are facing challenges, check out our Istio service mesh vendor evaluation guide.